Vise Coding in Practice: Structured AI Development Across 5 Autonomy Levels

with Dr. David Faragó

This live session walked through five levels of AI coding autonomy in Java, but the real takeaway was simpler: sustainable AI development depends on small reviewable steps, explicit specs, and deterministic quality gates.

Session Timeline

- 00:00 Intro video

- 00:10 Introduction

- 01:20 AI coding autonomy levels

- 13:14 What Vise Coding means

- 21:24 Demo 1, BDD challenge

- 44:40 Question, agent isolation

- 51:08 Demo 1, continued

- 01:10:40 Static analysis and quality checks

- 01:26:09 Spec-Driven Development

- 01:42:30 Demo 2, Resilience4J reduce flap

- 01:54:08 Comparing agents

- 02:13:43 Conclusion

- 02:21:45 Finishing demo 2

Dr. David Faragó joined me for a session about Vise Coding and the five levels of AI coding agent autonomy, from simple completion up to fully autonomous development agents. The useful part was not deciding which agent is best. It was seeing where AI workflows stay reviewable, and where they start to drift.

Why This Session Mattered

The session started with a useful frame: autonomy is not binary. There is a real spectrum between token completion, block completion, intent-based chat agents, local autonomous agents, and fully autonomous development agents.

That matters because the review burden changes with each level. More autonomy can mean more speed, but it can also mean bigger batches of code, wider diffs, and less clarity about what actually changed.

For me, that was one of the central lessons of the stream: reviewability is a core part of Vise Coding.

At higher autonomy levels, though, the priority shifts a bit. You still want code changes to stay understandable, but the stronger control point becomes keeping quality high through deterministic guardrails like quality gates and static analysis.

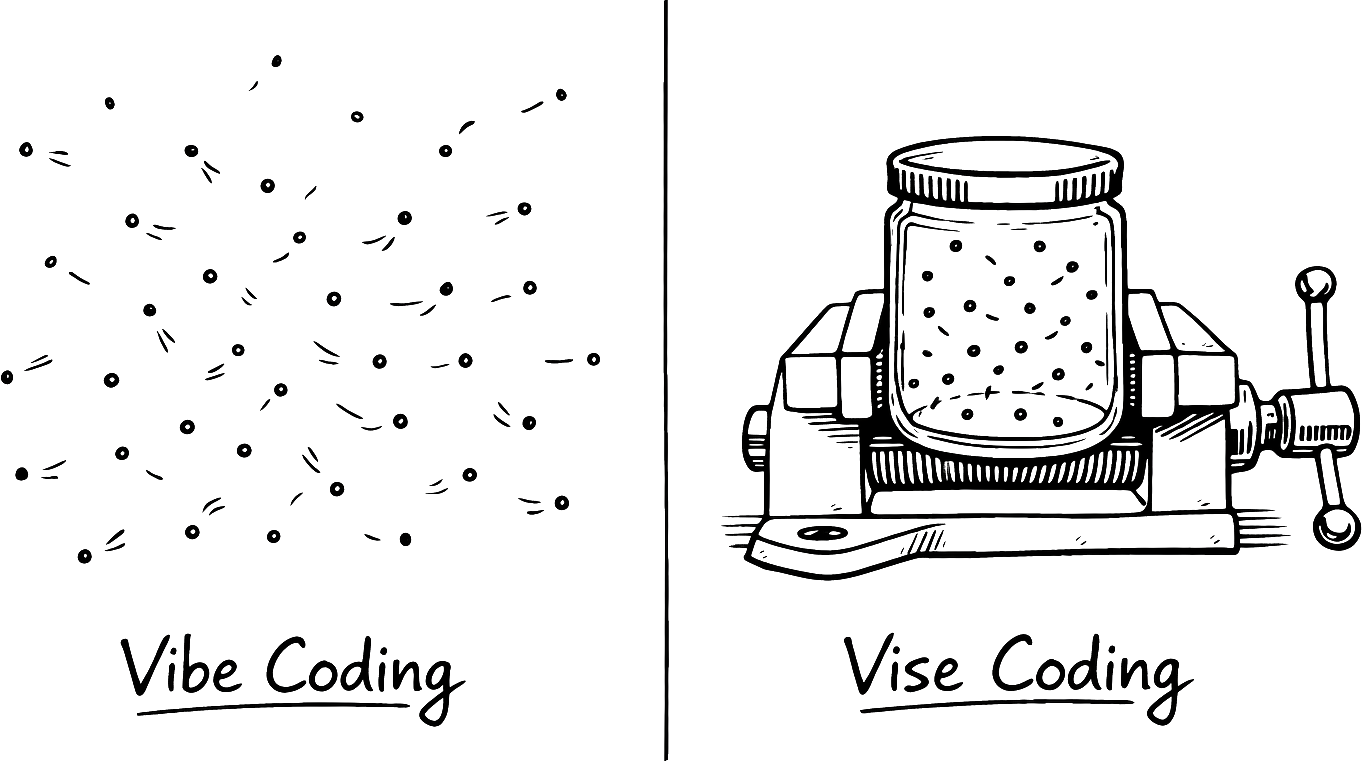

Where Vibe Coding Breaks Down

This is also where vibe coding starts to struggle.

If the model produces too much code at once, you stop reviewing properly. At that point, you are no longer steering the change. You are mostly trying to catch problems after the fact, and that does not scale well in a real project.

The live demos made that risk visible. Once the step size gets too large, the workflow becomes harder to reason about, harder to verify, and harder to maintain.
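
One way to make "step size" concrete is to count the changed lines in a diff and compare them against a review budget. The following is only a sketch under assumptions: the class name `StepSizeGuard`, its methods, and any threshold are hypothetical and were not shown in the session. It relies only on the unified diff convention that added lines start with `+` and removed lines start with `-`.

```java
import java.util.List;

// Hypothetical helper, not taken from the session: counts added and removed
// lines in a unified diff and compares them against a review budget.
final class StepSizeGuard {

    private StepSizeGuard() {
    }

    // Counts lines starting with '+' or '-' while skipping the "+++" and "---"
    // file header lines. This is a heuristic: a removed line whose own text
    // begins with "--" would be mistaken for a header.
    static int changedLines(List<String> diffLines) {
        int count = 0;
        for (String line : diffLines) {
            boolean fileHeader = line.startsWith("+++") || line.startsWith("---");
            boolean change = line.startsWith("+") || line.startsWith("-");
            if (change && !fileHeader) {
                count++;
            }
        }
        return count;
    }

    // True when the change stays within the assumed review budget.
    static boolean withinBudget(List<String> diffLines, int maxChangedLines) {
        return changedLines(diffLines) <= maxChangedLines;
    }
}
```

A check like this could run before a change is handed to a reviewer. The right budget depends on the team and the codebase, so any number plugged in here is a judgment call.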

What Vise Coding Adds

Vise Coding is the counterproposal: plan the change, keep the step size small, and make every step easy to review.

In the session, that meant working from explicit specifications, splitting the workload into smaller units, and relying heavily on automated tests. BDD-style workflows fit that model naturally because they keep expected behavior visible while the code changes underneath.
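
To illustrate how a BDD-style check keeps expected behavior visible, here is a minimal plain-Java sketch. It is not the code from the demo repository: the `Cart` domain, the scenario, and the `check` helper are hypothetical, and a real project would more likely use a test framework instead of hand-rolled assertions.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical domain class used only for illustration.
final class Cart {
    private final List<Integer> pricesInCents = new ArrayList<>();

    void add(int priceInCents) {
        if (priceInCents < 0) {
            throw new IllegalArgumentException("price must not be negative");
        }
        pricesInCents.add(priceInCents);
    }

    int totalInCents() {
        int total = 0;
        for (int price : pricesInCents) {
            total += price;
        }
        return total;
    }
}

// Each scenario reads like the specification it checks.
final class CartScenarios {

    private CartScenarios() {
    }

    // Scenario: the total is the sum of all item prices.
    static void totalIsSumOfItemPrices() {
        // Given an empty cart
        Cart cart = new Cart();

        // When two items are added
        cart.add(250);
        cart.add(199);

        // Then the total is the sum of both prices
        check(cart.totalInCents() == 449, "total should be 449 cents");
    }

    // Minimal assertion helper so the sketch needs no test framework.
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }
}
```

Calling `CartScenarios.totalIsSumOfItemPrices()` from a test runner or a `main` method throws an `AssertionError` as soon as the behavior drifts from the scenario, which is what lets the implementation change underneath without the expectation changing with it.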

That is close to what I described earlier in Guided Coding instead of Vibe Coding in Java. Both approaches favor structure over improvisation. What stood out here was how strongly Vise Coding tied that structure to automated tests and to small, checkable increments.

Higher Autonomy Still Works, but It Gets Harder

One of the more important takeaways was that Vise Coding does not stop working at higher autonomy levels. You can still apply it when agents do more of the work.

The problem is that it becomes harder to preserve the same discipline. As autonomy goes up, the steps often get bigger. Bigger steps mean more code to inspect, more context to hold in your head, and more room for subtle regressions.

That is exactly where the emphasis starts to move. Instead of depending mainly on humans to review every detail, you need a workflow that keeps producing high-quality code through automated checks, quality gates, and static analysis tools.

The question around agent isolation pointed in the same direction. If agents get more freedom, the surrounding environment needs clearer boundaries too. That is where sandboxes and controlled execution environments start to matter more.

Specs Matter Even More in Larger Systems

The Spec-Driven Development part of the stream was especially relevant for larger or older codebases.

If the specification stays current and code is created from it, the system remains easier to understand over time. That is useful in any project, but it matters even more in legacy systems where code often outlives the original reasoning behind it.

This also connects well to externalized guidance like AGENTS.md. If the operating model is written down, both humans and agents have a clearer contract to work from.

The Specific Agent Matters Less Than the Workflow

The comparison between agents was a useful reality check.

Codex, GitHub Copilot, Claude Code, and similar tools still differ, but the gap feels less durable than it used to be. Good ideas move quickly across products, especially around agent behavior and developer workflow features.

That makes the process around the agent more important than the agent name itself. The durable advantage is not the logo. It is whether the workflow keeps changes understandable, testable, and easy to correct.

Deterministic Guardrails Become More Valuable

That is why static analysis, quality checks, and automated tests keep becoming more important.

They give you a deterministic quality bar even when the model is not deterministic. That point came through clearly here, and it lines up with the earlier Live Vibe Coding Battle, where PMD, SpotBugs, JaCoCo, Trivy, and OWASP ZAP made the difference between code that looked fine and code that actually held up.
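
The idea of a deterministic quality bar can be sketched as a small gate that turns collected metrics into a pass or fail verdict. This is an illustration under assumptions, not the setup used in the session: `BuildMetrics`, `QualityGate`, and the thresholds are hypothetical, and the numbers would in practice come from the reports of whatever analysis tools the build runs.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical metrics a build might collect from its analysis tools.
// Field names are assumptions for illustration only.
final class BuildMetrics {
    final int staticAnalysisViolations;
    final double lineCoverage; // fraction between 0.0 and 1.0
    final int failedTests;

    BuildMetrics(int staticAnalysisViolations, double lineCoverage, int failedTests) {
        this.staticAnalysisViolations = staticAnalysisViolations;
        this.lineCoverage = lineCoverage;
        this.failedTests = failedTests;
    }
}

// A gate with fixed thresholds: the same metrics always give the same verdict,
// no matter which model or agent produced the code.
final class QualityGate {
    private final int maxViolations;
    private final double minLineCoverage;

    QualityGate(int maxViolations, double minLineCoverage) {
        this.maxViolations = maxViolations;
        this.minLineCoverage = minLineCoverage;
    }

    // Returns the reasons the gate failed; an empty list means the gate passed.
    List<String> evaluate(BuildMetrics metrics) {
        List<String> failures = new ArrayList<>();
        if (metrics.failedTests > 0) {
            failures.add("failed tests: " + metrics.failedTests);
        }
        if (metrics.staticAnalysisViolations > maxViolations) {
            failures.add("static analysis violations: " + metrics.staticAnalysisViolations);
        }
        if (metrics.lineCoverage < minLineCoverage) {
            failures.add("line coverage below minimum: " + metrics.lineCoverage);
        }
        return failures;
    }
}
```

For example, `new QualityGate(0, 0.80).evaluate(new BuildMetrics(0, 0.85, 0))` returns an empty list, while any failed test, extra violation, or coverage drop produces an explicit reason that a build step could use to reject the change.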

This is the part of AI development that I expect to age well: not blind trust in larger models, but stronger guardrails around whatever model is currently in use.

Useful Links

- Slides: Session slides

- Vise Coding article by David Faragó: Original Vise Coding article

- Demo 1 repository, Microsoft Copilot Hackathon BDD challenge: BDD challenge repository

- GitHub Spec Kit: Specification workflow starter kit

- Kiro: Spec-driven IDE preview

- Demo 2 repository, Resilience4J: Resilience4J demo repository

- Docker Sandboxes: Containerized sandbox environments

- Grith: AI sandbox platform

- Sprites: Cloud sandbox tooling

- Matchlock: Sandboxing for coding agents

- Firecracker: Lightweight microVM isolation

- Modal Sandboxes: Ephemeral code sandboxes

- Daytona: Secure dev sandboxes

- AI4JVM: AI for JVM engineering

- Memory upgrade for AI agents, beads: Persistent agent memory experiments

- Avoid Losing Work with Jujutsu (jj) for AI Coding Agents: jj workflows for agents

- jj-benchmark: jj benchmark results

- Jujutsu: Modern version control

- AGENTS.md template: Agent operating model template

Final Thought

This session did not make the case for giving agents unlimited freedom. It made the case for building a workflow that stays understandable as autonomy increases.

If you keep the specs explicit, the steps small, and the quality gates strict, AI can be a serious engineering tool in a Java workflow. If you skip those things, the review burden catches up very quickly.
